AI didn’t kill the craft. It exposed it.

Each year, I make a small ritual out of watching Dr. Werner Vogels’ AWS keynote.

Yes, there’s always a layer of product announcements and positioning. But if you strip away the sales coating, there’s usually a deeper engineering signal underneath.

This article draws on what I took from this year’s talk, which was, sadly, his last keynote.

Every so often, the same headline returns.

“Developers are done.”

“Anyone can build software now.” (React, then No-Code, then AI)

It’s a tempting story, because it feels clean.

And disruption always wants a clean narrative.

But history is annoying. It rarely follows the disruption narrative we want.

Assembly programmers were told compilers would replace them.

Instead, compilers raised the level of abstraction and created entire industries.

Ops engineers were told cloud automation would replace them.

Instead, cloud lowered the cost of experimentation and enabled more companies to be founded.

Tools don’t erase developers. They raise the floor. Then the ceiling moves.

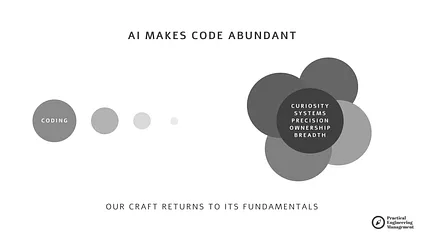

AI is doing exactly that. It makes code cheap.

Which makes judgment expensive.

The uncomfortable truth

Generative AI can produce software in seconds.

And still fail at the only part that matters: defining why this system exists, what must be true, and what cannot break.

AI isn’t in your budget meeting.

It doesn’t feel the tension between cost and latency.

It doesn’t understand that:

- your customer support system needs four 9s

- your internal dashboard can be down during peak sales

- “make it fast” sometimes means “make it cheap”

- “ship it” sometimes means “cover my political risk”
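The first two bullets are where judgment turns into arithmetic. As a rough illustration, the sketch below converts an availability target into the yearly downtime it permits. The helper name `downtime_budget_minutes` is hypothetical, and the math assumes a 365-day year with no excluded maintenance windows.

```python
# A minimal sketch: translate an availability target ("N nines") into a
# yearly downtime budget. Assumes a 365-day year and no excluded
# maintenance windows.

MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes


def downtime_budget_minutes(availability: float) -> float:
    """Return the minutes of downtime per year allowed by an availability target."""
    if not 0.0 < availability <= 1.0:
        raise ValueError("availability must be in (0, 1]")
    return (1.0 - availability) * MINUTES_PER_YEAR


for target in (0.99, 0.999, 0.9999):
    print(f"{target:.2%} -> {downtime_budget_minutes(target):.1f} min/year")
```

Four 9s leaves roughly 52.6 minutes of downtime per year. 99% leaves about 87.6 hours. Which one a system needs is a business decision, not a code-generation problem.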

AI does not read between the lines. It reads the lines.

And if you give it garbage, it returns convincing garbage.

So the future developer isn’t the one who can type. It’s the one who can:

- clarify constraints

- design mechanisms

- own consequences

- think in systems

- build across disciplines

That’s the Renaissance Engineer. Not a romantic archetype, but a survival strategy for anyone who plans to stay in software engineering for the long haul.

The 5 Pillars of the Renaissance Engineer

Based on the keynote, I built a framework that continually reminds us what a Renaissance Engineer is.

1) Curiosity as a professional discipline

Curiosity isn’t a personality trait anymore. It’s operational hygiene.

AI accelerates execution so much that the real risk is not moving too slowly.

It’s confidently moving in the wrong direction.

Most engineering waste I see today comes from:

- shipping before understanding

- optimizing before validating

- automating before questioning

Curiosity interrupts that loop.

Not abstract curiosity. Structured curiosity.

What that looks like in practice:

Replace “research spikes” with learning loops

Instead of:

“Let’s investigate AI usage.”

Define:

- one specific question

- one experiment that answers it

- one artifact capturing the result

Example:

Not: Can we use AI for support automation?

Better: Can AI draft Tier-1 responses that reduce response time by 20% without increasing escalation rate?

That changes behavior immediately.
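One way to make the loop tangible is to treat the question, the experiment, and the artifact as data. This is a minimal sketch under assumptions. `SupportMetrics`, `LearningLoop`, and the metric fields are hypothetical names. The numbers are invented to show the shape of the result and are not findings.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SupportMetrics:
    """Metrics for one cohort of Tier-1 tickets (baseline or trial)."""
    median_response_minutes: float
    escalation_rate: float  # fraction of tickets escalated beyond Tier-1


@dataclass(frozen=True)
class LearningLoop:
    question: str    # one specific question
    experiment: str  # one experiment that answers it
    min_response_time_reduction: float = 0.20  # the "20%" in the question

    def evaluate(self, baseline: SupportMetrics, trial: SupportMetrics) -> dict:
        """Compare trial against baseline and return the artifact to keep."""
        reduction = 1 - trial.median_response_minutes / baseline.median_response_minutes
        # Small tolerance so an exact 20% reduction isn't rejected by float rounding.
        faster_enough = reduction >= self.min_response_time_reduction - 1e-9
        no_more_escalations = trial.escalation_rate <= baseline.escalation_rate
        return {
            "question": self.question,
            "experiment": self.experiment,
            "response_time_reduction": round(reduction, 3),
            "escalation_delta": round(trial.escalation_rate - baseline.escalation_rate, 3),
            "hypothesis_holds": faster_enough and no_more_escalations,
        }


loop = LearningLoop(
    question=("Can AI draft Tier-1 responses that reduce response time by 20% "
              "without increasing escalation rate?"),
    experiment="Two-week trial: AI drafts replies, agents edit before sending",
)
# Made-up numbers, purely to show the shape of the artifact.
baseline = SupportMetrics(median_response_minutes=30.0, escalation_rate=0.12)
trial = SupportMetrics(median_response_minutes=22.0, escalation_rate=0.12)
artifact = loop.evaluate(baseline, trial)
print(artifact["response_time_reduction"], artifact["hypothesis_holds"])
```

The returned dict is the artifact. It records the question, the experiment, and whether both halves of the hypothesis held. This means a "faster but more escalations" result can't quietly count as a win.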

Expose engineers to consequences

Metrics dashboards don’t teach empathy. User friction does.

If engineers regularly:

- review support tickets

- sit in customer calls

- observe production incidents end-to-end

they naturally ask better questions before building.

Curiosity grows fastest where feedback is unavoidable.

Frameworks for you

You can use these frameworks to structure your curiosity:

- The “User-Friendly” Engineering Framework - use it to shift from shipping code to reasoning about users’ confusion, mental models, and behavior.

- The Friction Board - use it to map the gap between what users think is happening and what is actually happening in the system.

2) Systems thinking as default engineering posture

Software failures rarely originate where they appear.

- Latency problems often start with org design (Conway’s Law).

- Quality problems often start with incentives.

- Security problems often start with unclear ownership.

If you only optimize the component, the system compensates elsewhere.

That’s why AI adoption sometimes paradoxically slows teams down.

Output increases.

Coordination overhead increases faster.

Classic balancing loop.

How to operationalize systems thinking:

Map incentives, not just architecture

When diagnosing recurring issues, ask:

- What behavior does the current system reward?

- What behavior does it punish?

- Where does information arrive too late?

Example:

If teams are rewarded for feature velocity but not reliability, incidents aren’t a technical problem.

They’re a system design outcome.

Look for second-order effects explicitly

Before introducing a tool, process, or AI workflow, write:

- Immediate benefit

- Likely unintended consequence

- What metric will detect that consequence early
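The three-line write-up can double as a lightweight check. The sketch below is illustrative only. `SecondOrderCheck`, the example change, and the 24-hour threshold are assumptions. They were chosen to echo the earlier point that AI can raise output while coordination overhead, here review wait time, grows faster.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SecondOrderCheck:
    """One row of a pre-adoption review (all field values are examples)."""
    change: str
    immediate_benefit: str
    likely_consequence: str
    early_warning_metric: str
    alert_threshold: float  # metric value at which we revisit the change
    higher_is_worse: bool = True

    def needs_review(self, observed: float) -> bool:
        """True when the early-warning metric crosses its threshold."""
        if self.higher_is_worse:
            return observed >= self.alert_threshold
        return observed <= self.alert_threshold


check = SecondOrderCheck(
    change="AI-assisted code generation",
    immediate_benefit="More pull requests opened per engineer",
    likely_consequence="Review queue grows faster than reviewers can keep up",
    early_warning_metric="Median hours a PR waits for first review",
    alert_threshold=24.0,
)

for observed in (6.0, 30.0):
    print(observed, check.needs_review(observed))
```

Writing the threshold down before adoption matters more than the code. It forces the team to agree in advance on what "we created three other bottlenecks" would look like.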

This avoids the common “we solved one bottleneck, created three others” pattern.

Frameworks for you

- On Designing Systems, Not Just Shipping Code

- Data → Information → Knowledge → Wisdom (DIKW) - How to Be a Data-Driven Engineering Leader

3) Precision communication as engineering infrastructure

In an AI-augmented environment, ambiguity becomes technical debt.

Not metaphorically. Literally.

Every vague requirement becomes:

- hallucinated code

- misaligned architecture

- rework cycles

- hidden operational risk

And unlike traditional code debt, ambiguity compounds invisibly.

Practical ways to raise precision:

Adopt constraint-first specifications

Most specs start with what we want.

Better specs start with:

- What must never happen.

- What tradeoffs are acceptable.

- Where flexibility exists.

This mirrors real engineering constraints:

- cost ceilings

- latency tolerances

- compliance boundaries

- operational realities

AI responds dramatically better to constraints than to aspirations.

So do humans.
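A constraint-first spec can also be written down as structure rather than prose. The following is a hypothetical shape. `ConstraintFirstSpec` and its field names are assumptions, not a standard format. It checks a proposed design against the hard constraints and leaves tradeoffs and flexibility as guidance for whoever, or whatever, fills in the rest.

```python
from dataclasses import dataclass, field


@dataclass
class ConstraintFirstSpec:
    """Hypothetical spec shape: what must never happen comes first."""
    must_never: list[str]          # hard invariants (e.g., compliance boundaries)
    max_monthly_cost_usd: float    # cost ceiling
    max_p99_latency_ms: float      # latency tolerance
    acceptable_tradeoffs: list[str] = field(default_factory=list)
    flexible: list[str] = field(default_factory=list)  # where flexibility exists

    def violations(self, design: dict) -> list[str]:
        """Check a proposed design against the hard constraints only."""
        problems = []
        if design["monthly_cost_usd"] > self.max_monthly_cost_usd:
            problems.append(
                f"monthly cost {design['monthly_cost_usd']} exceeds ceiling "
                f"{self.max_monthly_cost_usd}"
            )
        if design["p99_latency_ms"] > self.max_p99_latency_ms:
            problems.append(
                f"p99 latency {design['p99_latency_ms']} ms exceeds "
                f"{self.max_p99_latency_ms} ms"
            )
        for forbidden in self.must_never:
            if forbidden in design.get("behaviors", []):
                problems.append(f"violates must-never: {forbidden}")
        return problems


spec = ConstraintFirstSpec(
    must_never=["store raw card numbers", "send EU personal data outside the EU"],
    max_monthly_cost_usd=5000,
    max_p99_latency_ms=300,
    acceptable_tradeoffs=["analytics views may lag by up to 15 minutes"],
    flexible=["choice of message queue"],
)
proposal = {
    "monthly_cost_usd": 6200,
    "p99_latency_ms": 180,
    "behaviors": ["cache responses at the edge"],
}
print(spec.violations(proposal))
```

Only the hard limits are checked mechanically. The tradeoffs and flexible areas are there so a reviewer, or a model given the spec as context, knows where it is allowed to make choices.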

Standardize “definition of done” beyond code

Include:

- observability readiness

- operational runbooks

- cost awareness

- rollback strategy

Without this, teams ship artifacts, not systems.
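A definition of done that goes beyond code can be enforced with something as small as a checklist. The sketch below assumes a change is described as a dict of boolean flags. `DONE_CRITERIA` and `missing_done_items` are hypothetical names, and wiring this into a real CI pipeline is left out.

```python
# Hypothetical definition-of-done items beyond "the code works".
DONE_CRITERIA = (
    "observability",   # dashboards/alerts exist for the new behavior
    "runbook",         # on-call knows how to operate it
    "cost_estimate",   # expected spend is written down
    "rollback_plan",   # we know how to undo it
)


def missing_done_items(change: dict[str, bool]) -> list[str]:
    """Return the definition-of-done items a change has not satisfied yet."""
    return [item for item in DONE_CRITERIA if not change.get(item, False)]


pr = {"code_reviewed": True, "observability": True, "runbook": False, "rollback_plan": True}
print(missing_done_items(pr))  # ['runbook', 'cost_estimate']
```

Missing keys count as "not done" on purpose. An item nobody thought about is exactly the gap this checklist is meant to surface.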

4) Ownership beyond delivery

Ownership used to mean: “My code works.”

Now it increasingly means: “This system behaves responsibly in the real world.”

AI accelerates delivery but doesn’t absorb liability. That stays human. And it’s where senior engineers distinguish themselves.

How ownership becomes visible:

Follow features into production life

After launch:

- Who watches error budgets?

- Who monitors cost drift?

- Who tracks user confusion signals?

- Who owns model behavior over time?

If the answer is “nobody specifically,” ownership is performative.
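The post-launch questions above can be turned into an explicit ownership map. In this hypothetical sketch, `POST_LAUNCH_SIGNALS` mirrors the four questions. Any signal without a named owner, or with a placeholder like "everyone", is flagged as unowned.

```python
from typing import Optional

# One entry per post-launch question: error budgets, cost drift,
# user confusion signals, model behavior over time.
POST_LAUNCH_SIGNALS = ("error_budget", "cost_drift", "user_confusion", "model_behavior")

# Placeholder answers that mean "nobody specifically".
VAGUE_OWNERS = {"", "everyone", "the team", "nobody specifically"}


def unowned_signals(owners: dict[str, Optional[str]]) -> list[str]:
    """Return signals with no named person or team responsible for them."""
    return [
        signal for signal in POST_LAUNCH_SIGNALS
        if (owners.get(signal) or "").strip().lower() in VAGUE_OWNERS
    ]


feature_owners = {
    "error_budget": "payments-oncall",
    "cost_drift": "Everyone",
    "user_confusion": None,
    # "model_behavior" was never assigned at all
}
print(unowned_signals(feature_owners))
```

Running it flags `cost_drift`, `user_confusion`, and `model_behavior`, which are exactly the places where ownership would otherwise be performative.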

Institutionalize stop-the-line authority

Borrowed from manufacturing, but increasingly critical for software:

Anyone should be able to:

- pause deployment

- escalate risk

- block release

…without social penalty.

If escalation is culturally risky, problems hide until they explode.

Frameworks for you

- The Problem Solving Framework

- The Three Ways: Flow, Feedback, and Continuous Learning - Road to a DevOps Culture

- You Build It, You Run It

5) Polymathy as leverage, not hobby

Interdisciplinary knowledge isn’t about being interesting. It’s about reducing blind spots.

AI already handles narrow specialization extremely well. Human advantage shifts toward:

- integration

- context interpretation

- tradeoff navigation

That requires breadth.

Not shallow browsing. Functional literacy across domains.

Making breadth practical:

Build adjacent domain literacy intentionally

Engineers don’t need to become:

- product managers

- designers

- finance experts

But they should understand:

- how product decisions are made

- how cost structures shape architecture

- how users interpret interfaces

- how regulations shape constraints

This prevents technically elegant but strategically useless solutions.

Connect technical decisions to business impact explicitly

Before major engineering work, articulate:

- Which business metric changes?

- What customer behavior shifts?

- What risk is reduced?

If you can’t answer that, you’re probably optimizing locally.

Not systemically.

The real shift: AI in the human loop

Werner’s line, “AI won’t replace you, but it will make you irrelevant if you don’t evolve,” lands because it’s specific.

AI pushes the role up the stack:

- Less typing.

- More verification.

- More intent.

- More systems thinking.

Which means:

If you’re paid primarily for output, you’re exposed.

If you’re paid for judgment, you’re essential.

And here’s the kicker:

Lowering barriers does not reduce demand. It increases it.

Because once software becomes easier to build, more people try to build more things.

And complexity grows.

Final thought

AI will make code abundant. So the craft returns to its fundamentals:

- curiosity

- systems

- precision

- ownership

- breadth

Not what you know. What you keep learning. And what you choose to be responsible for.